Universal Correspondence Network

This work will be presented at NIPS 2016 at the oral session on Wed Dec 7th 4:40PM - 5:00PM

Abstract

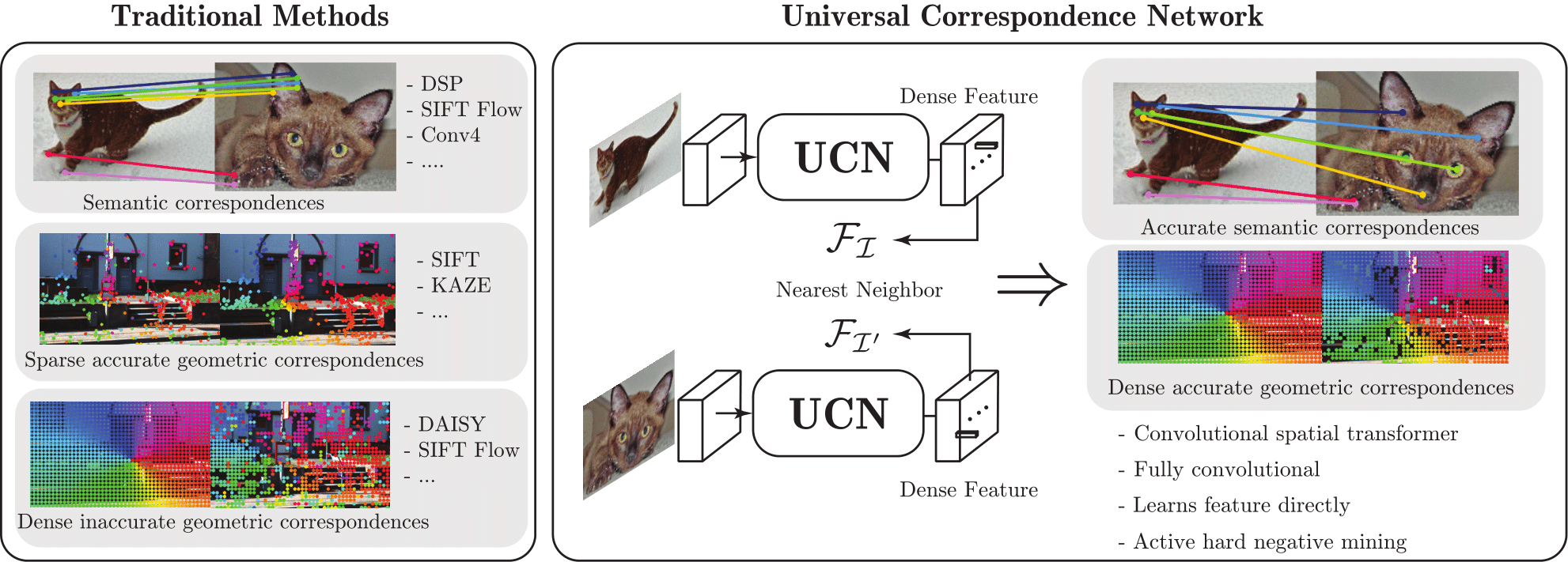

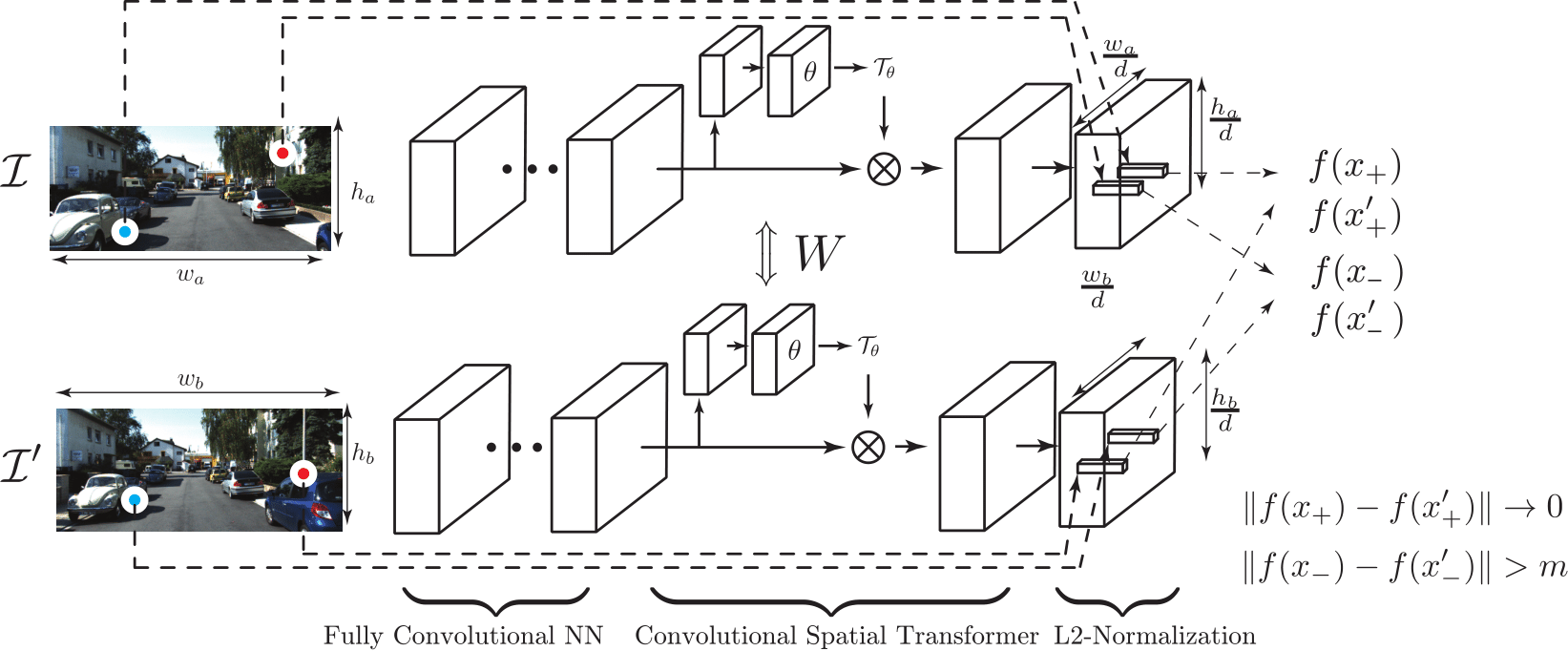

We propose a novel deep metric learning approach to visual correspondence estimation that is shown to be advantageous over approaches that optimize a surrogate patch similarity objective. We introduce several innovations: a correspondence contrastive loss in a fully convolutional architecture, on-the-fly active hard negative mining, and a convolutional spatial transformer. These enable more efficient training, accurate gradient computations, faster testing, and local patch normalization, which lead to improved speed or accuracy. Experiments show that our features outperform the prior state of the art on both geometric and semantic correspondence tasks, even without any spatial priors or global optimization. In future work, we will explore applications of our correspondences to rigid and non-rigid motion or shape estimation, as well as the use of global optimization.
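To make the two training ideas named in the abstract more concrete, here is a minimal sketch in plain Python. It is not the released Caffe code. It assumes the loss takes the usual contrastive form (squared distance for matching points, squared hinge on a margin for non-matching points) and it simplifies hard negative mining to "nearest feature that is not the true match". The function names, the `margin` default, and the list-based feature format are all hypothetical choices made for illustration.

```python
import math


def l2_distance(a, b):
    """Euclidean distance between two equal-length feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def correspondence_contrastive_loss(feats_a, feats_b, is_match, margin=1.0):
    """Contrastive loss averaged over N candidate point pairs (assumed form).

    feats_a[i], feats_b[i]: features sampled at one candidate pair of points.
    is_match[i]: 1 if the two points correspond, 0 otherwise.
    margin: hypothetical default; non-matches farther apart than this cost nothing.
    """
    total = 0.0
    for fa, fb, s in zip(feats_a, feats_b, is_match):
        d = l2_distance(fa, fb)
        if s:
            total += d ** 2                     # pull matching features together
        else:
            total += max(0.0, margin - d) ** 2  # push non-matches past the margin
    return total / (2 * len(is_match))


def hardest_negative(query, candidates, true_index):
    """Index of the closest candidate feature that is not the true match.

    Simplification: the true match is excluded by index only, with no
    pixel-distance threshold around the ground-truth location.
    """
    best_index, best_dist = None, float("inf")
    for i, c in enumerate(candidates):
        if i == true_index:
            continue
        d = l2_distance(query, c)
        if d < best_dist:
            best_index, best_dist = i, d
    return best_index
```

In a fully convolutional setting, the feature vectors would be read from dense feature maps at the annotated point locations, so a single forward pass can serve many pairs. The negative returned by `hardest_negative` would then be passed to the loss with `is_match` set to 0.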

Citing this work

If you find this work useful in your research, please consider citing:

@incollection{choy_nips16,

title = {Universal Correspondence Network},

author = {Choy, Christopher B and Gwak, JunYoung and Savarese, Silvio and Chandraker, Manmohan},

booktitle = {Advances in Neural Information Processing Systems 29},

year = {2016},

}

- Please follow the instructions on the NEC-Labs website to download the source code

- Paper

- Slides

- Blog post about the UCN and the FAQ

- Trained network weights

  - googlenet-conv-spatial-transformer trained on CUB2011, and prototxts

  - googlenet-conv-spatial-transformer trained on Flowweb, and prototxts

  - googlenet-conv-spatial-transformer trained on VOC2011, and prototxts

  - googlenet-conv-spatial-transformer trained on SINTEL, and prototxts

  - googlenet-conv-spatial-transformer trained on KITTI Flow, and prototxts

- Supplementary Materials

- CUB2011 Dataset with Filtered Annotations

- VOC2011 Correspondence Annotations

Patent

US Patent 15045 (449-460) END-TO-END FULLY CONVOLUTIONAL FEATURE LEARNING FOR PATCH SIMILARITY