Weakly Supervised 3D Reconstruction with Adversarial Constraint

JunYoung Gwak, Christopher B. Choy, Animesh Garg, Manmohan Chandraker, Silvio Savarese

Abstract

Supervised 3D reconstruction has witnessed significant progress through the use of deep neural networks. However, this increase in performance requires large-scale annotations of 2D/3D data. In this paper, we explore inexpensive 2D supervision as an alternative to expensive 3D CAD annotation. Specifically, we use foreground masks as weak supervision through a raytrace pooling layer that enables perspective projection and backpropagation. Additionally, since 3D reconstruction from masks is an ill-posed problem, we propose to constrain the 3D reconstruction to the manifold of unlabeled realistic 3D shapes that match mask observations. We demonstrate that learning a log-barrier solution to this constrained optimization problem resembles the GAN objective, enabling the use of existing tools for training GANs. We evaluate and analyze the manifold-constrained reconstruction on various datasets for single- and multi-view reconstruction of both synthetic and real images.

Proposed Method

Overview

Neural network-based 3D reconstruction requires large-scale annotation of a ground-truth 3D model for every 2D image, which is infeasible for real-world applications. In this paper, we explore relatively inexpensive 2D supervision as an alternative to expensive 3D CAD annotation.

We propose to learn 3D reconstruction from 2D supervision as follows:

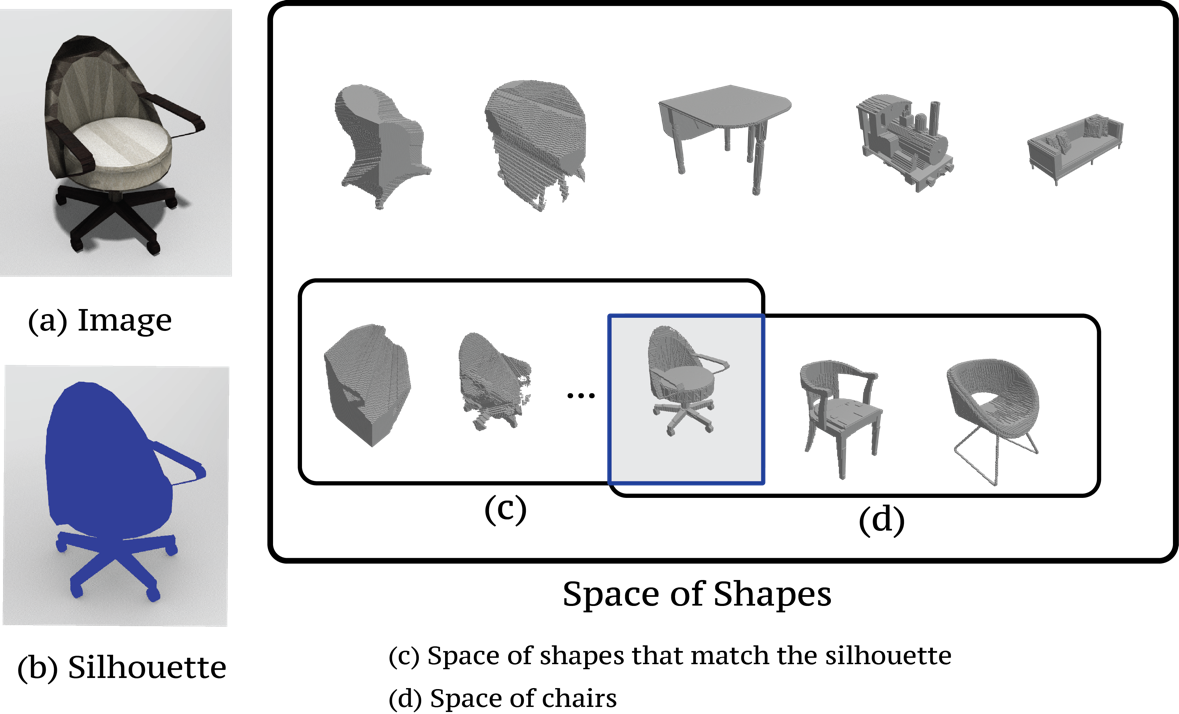

- Raytrace Pooling: Inspired by the visual hull algorithm, we enforce foreground masks as weak supervision through a raytrace pooling layer that enables perspective projection and backpropagation ((c) in the figure above).

- Adversarial Constraint: Since 3D reconstruction from masks is an ill-posed problem, we propose to constrain the 3D reconstruction to the manifold of unlabeled realistic 3D shapes that match mask observations ((d) in the figure above).

Raytrace Pooling

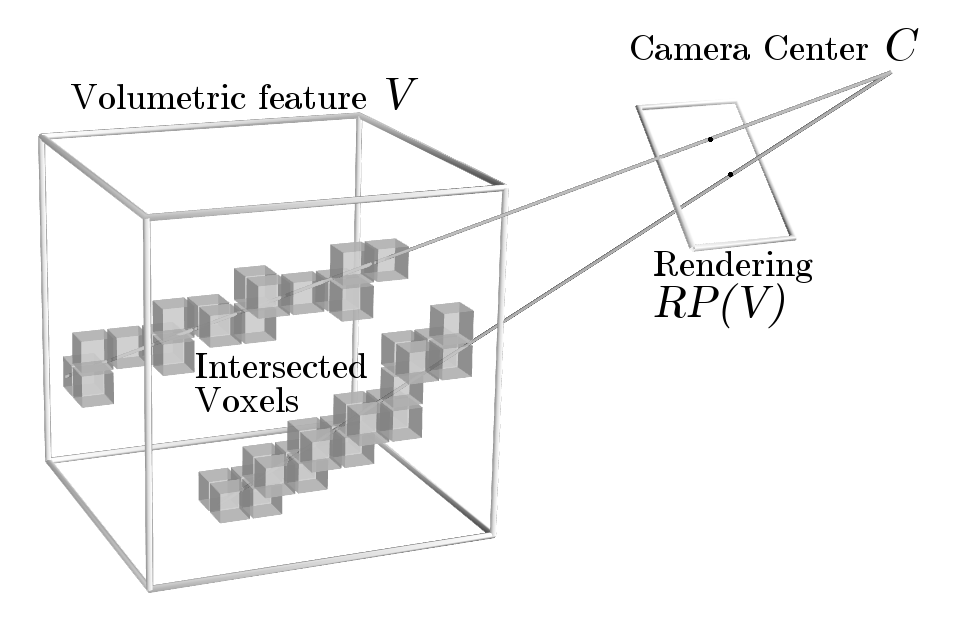

To learn 3D shapes from 2D masks, we propose a differentiable raytrace pooling layer through which gradients can be backpropagated. The layer takes a viewpoint and a 3D shape as input and renders the corresponding 2D image: the value of each pixel is the maximum over the voxels hit by the ray cast through that pixel, as shown in the figure above. This layer bridges the gap between 2D masks and 3D shapes, allowing us to apply a loss inspired by the visual hull. Moreover, it is an efficient implementation of true raytracing based on a voxel-ray hit test. Unlike sampling-based approaches, our implementation does not suffer from aliasing artifacts and does not require tuning sampling hyperparameters.
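The max-pooling step described above can be sketched in NumPy. This is only an illustration under stated assumptions, not the authors' implementation: the per-pixel lists of voxels hit by each ray (`ray_voxel_ids`) are assumed to be precomputed, and the helper `axis_aligned_rays` is a hypothetical toy that casts orthographic rays along one grid axis instead of running the perspective voxel-ray hit test. All function names are made up for this sketch.

```python
import numpy as np

def raytrace_pool_forward(voxels, ray_voxel_ids):
    """Max-pool occupancy along each ray.

    voxels: (D, H, W) array of occupancy values.
    ray_voxel_ids: one integer array per pixel holding the flat indices
        of the voxels its ray passes through (assumed precomputed).
    Returns the rendered mask (one value per pixel) and, per pixel, the
    flat index of the voxel that produced the maximum (-1 if no hit).
    """
    flat = voxels.ravel()
    mask = np.zeros(len(ray_voxel_ids))
    argmax = np.full(len(ray_voxel_ids), -1, dtype=np.int64)
    for p, ids in enumerate(ray_voxel_ids):
        if len(ids) == 0:
            continue  # ray misses the grid: pixel stays background
        k = int(np.argmax(flat[ids]))
        argmax[p] = ids[k]
        mask[p] = flat[ids[k]]
    return mask, argmax

def raytrace_pool_backward(grad_mask, argmax, voxel_shape):
    """Route each pixel's gradient to the voxel that won the max."""
    grad = np.zeros(int(np.prod(voxel_shape)))
    hit = argmax >= 0
    # np.add.at accumulates correctly if several rays pick the same voxel.
    np.add.at(grad, argmax[hit], grad_mask[hit])
    return grad.reshape(voxel_shape)

def axis_aligned_rays(shape):
    """Toy stand-in for the hit test: orthographic rays along axis 0."""
    D, H, W = shape
    idx = np.arange(D * H * W).reshape(shape)
    return [idx[:, h, w] for h in range(H) for w in range(W)]

# Usage: a 2x2x2 grid with a single occupied voxel.
voxels = np.zeros((2, 2, 2))
voxels[1, 0, 1] = 0.9
rays = axis_aligned_rays(voxels.shape)
mask, argmax = raytrace_pool_forward(voxels, rays)
grad = raytrace_pool_backward(np.ones_like(mask), argmax, voxels.shape)
```

As with ordinary max pooling, only the voxel with the largest occupancy along a ray receives gradient from that pixel, which is what lets a 2D mask loss update the 3D shape.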

Adversarial Constraint

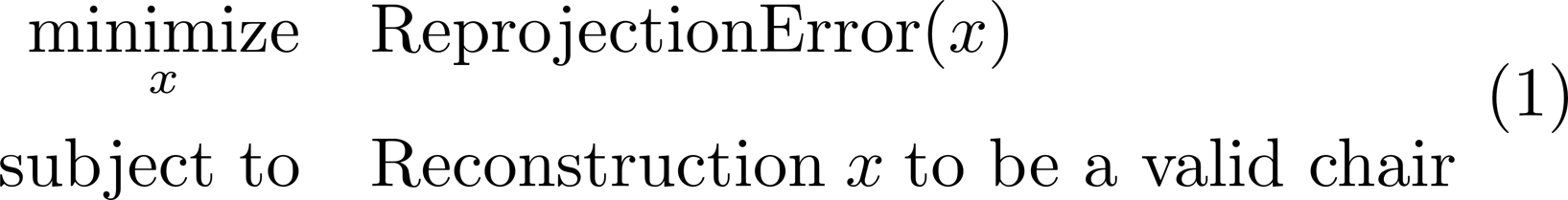

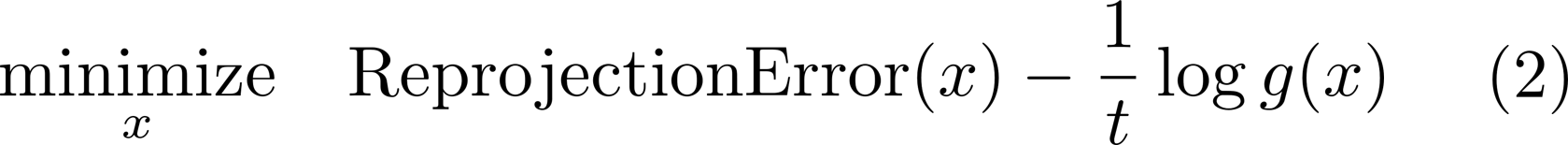

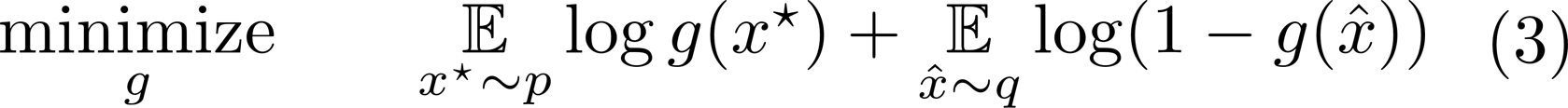

A 2D mask constraint, unlike a full 3D shape constraint, cannot enforce concavity or symmetry, which may be crucial for 3D reconstruction. Therefore, we apply an additional constraint so that the network generates a "valid shape", as in equation (1) above. To solve equation (1), we demonstrate that a constrained optimization problem can be tackled using a GAN-like network structure and loss. Equation (1) can be rewritten as equation (2) using the log-barrier method, where g(x)=1 iff reconstruction x is realistic and 0 otherwise. We observe that the ideal GAN discriminator g*(x), which outputs g*(x)=1 iff reconstruction x is realistic, is analogous to the penalty function g(x). Therefore, we train the constraint using a GAN-like adversarial loss, as in equation (3).
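For readers without access to the equation figures, the chain of reasoning can be sketched as follows. This is a hedged reconstruction from the description above, not a transcription of the paper's equations: the symbols $f_\theta$ (reconstruction network), $I$ (input image), $m$ and $c$ (masks and camera viewpoints), $\mathcal{L}_{\text{reproj}}$ (mask reprojection loss), $\mathcal{M}$ (manifold of realistic shapes), $t$ (barrier weight), $D_\phi$ (discriminator), and $x^{*}$ (an unlabeled realistic shape) are all assumed notation.

```latex
\begin{align}
% (1) constrained problem: match the masks while staying on the manifold
\min_{\theta}\;& \mathcal{L}_{\text{reproj}}\big(f_\theta(I), m, c\big)
  \quad \text{s.t.} \quad f_\theta(I) \in \mathcal{M} \\
% (2) log-barrier form, with g(x) = 1 iff x is realistic and 0 otherwise
\min_{\theta}\;& \mathcal{L}_{\text{reproj}}\big(f_\theta(I), m, c\big)
  - \frac{1}{t} \log g\big(f_\theta(I)\big) \\
% (3) GAN-like form: the learned discriminator D_phi stands in for g
\min_{\theta}\;& \mathcal{L}_{\text{reproj}}\big(f_\theta(I), m, c\big)
  - \frac{1}{t} \log D_\phi\big(f_\theta(I)\big), \\
\max_{\phi}\;& \mathbb{E}_{x^{*}}\big[\log D_\phi(x^{*})\big]
  + \mathbb{E}_{I}\big[\log\big(1 - D_\phi(f_\theta(I))\big)\big]
\end{align}
```

With $g(x) \in \{0, 1\}$, the barrier term $-\tfrac{1}{t}\log g(x)$ is zero for realistic shapes and infinite otherwise, so the second line restates the constraint of the first. Replacing the non-differentiable $g$ with a discriminator trained on unlabeled realistic shapes gives a term of the same form as the generator loss in a GAN.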

Citing this work

If you find our work helpful, please cite it with the following BibTeX entry.

@inproceedings{gwak2017weakly,
  title={Weakly Supervised 3D Reconstruction with Adversarial Constraint},
  author={Gwak, JunYoung and Choy, Christopher B and Chandraker, Manmohan and Garg, Animesh and Savarese, Silvio},
  booktitle={3D Vision (3DV), 2017 Fifth International Conference on 3D Vision},
  year={2017}
}