Introduction

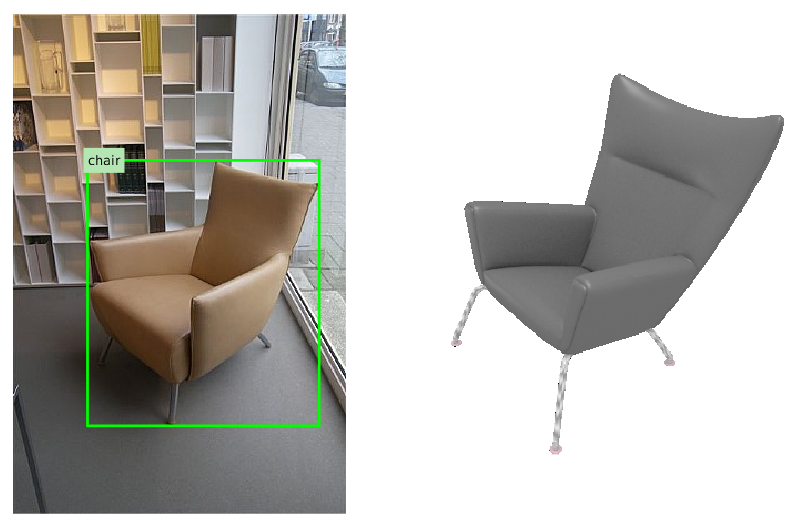

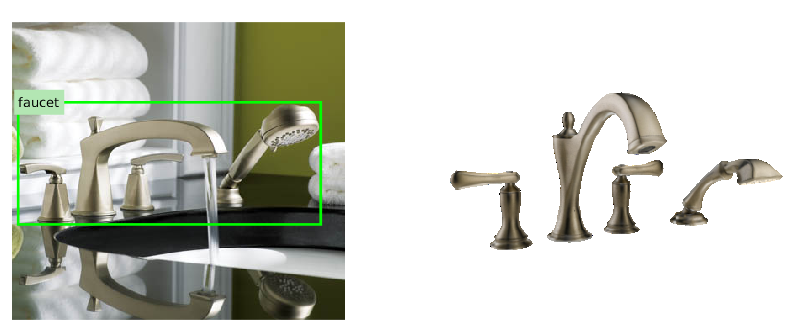

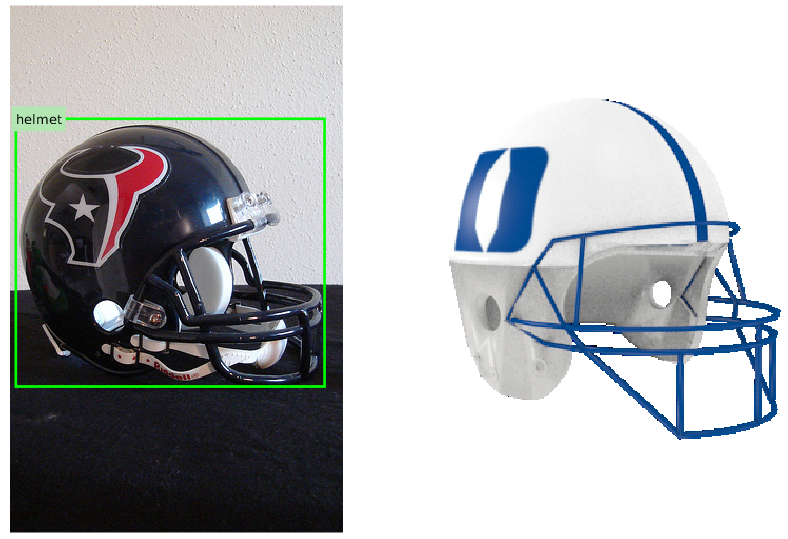

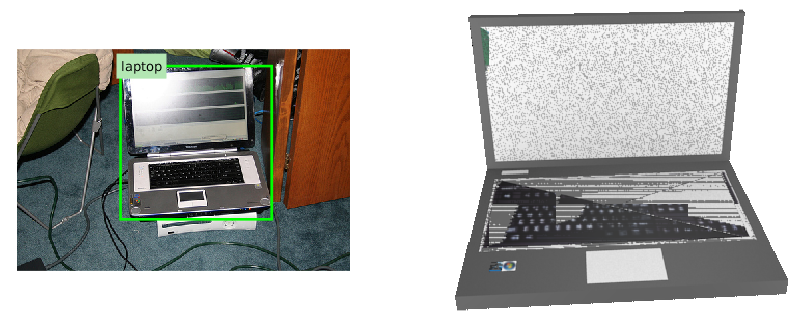

We contribute a large-scale database for 3D object recognition, named ObjectNet3D, that consists of 100 categories, 90,127 images, 201,888 objects in these images, and 44,147 3D shapes. Objects in the images are aligned with the 3D shapes, and the alignment provides both an accurate 3D pose annotation and the closest 3D shape annotation for each 2D object. Consequently, our database is useful for recognizing the 3D pose and 3D shape of objects from 2D images. We also provide baseline experiments on four tasks: region proposal generation, 2D object detection, joint 2D detection and 3D object pose estimation, and image-based 3D shape retrieval. These can serve as baselines for future research using our database.

Publication

- Yu Xiang, Wonhui Kim, Wei Chen, Jingwei Ji, Christopher Choy, Hao Su, Roozbeh Mottaghi, Leonidas Guibas and Silvio Savarese. ObjectNet3D: A Large Scale Database for 3D Object Recognition. In European Conference on Computer Vision (ECCV), 2016. pdf, bibtex, technical report

Dataset

Results

- We provide our detection and pose estimation results on the validation set here (~920 MB). The network was trained on the train set with SelectiveSearch region proposals. The evaluation code for detection and pose estimation used in our paper is included in the ObjectNet3D toolbox.

- Each line in the result file has the format: image_id, x1, y1, x2, y2, score, azimuth, elevation, in-plane rotation, where (x1, y1) and (x2, y2) are the upper-left and lower-right coordinates of the bounding box, score is the detection score, and azimuth, elevation and in-plane rotation are in [-pi, pi].
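The line format above can be read with a few lines of Python. This is a minimal sketch, not part of the ObjectNet3D toolbox: the names `Detection` and `parse_result_line` are hypothetical, and the field separator is an assumption (the sketch accepts commas and/or whitespace, since the description does not specify it). The angle check allows a small tolerance for rounded values.

```python
import math
import re
from typing import NamedTuple


class Detection(NamedTuple):
    """One detection with its estimated pose (hypothetical container)."""
    image_id: str
    x1: float  # upper-left corner of the bounding box
    y1: float
    x2: float  # lower-right corner of the bounding box
    y2: float
    score: float
    azimuth: float
    elevation: float
    in_plane_rotation: float


def parse_result_line(line: str, tol: float = 1e-4) -> Detection:
    """Parse one result line into a Detection.

    Assumption: fields are separated by commas and/or whitespace.
    """
    fields = [f for f in re.split(r"[,\s]+", line.strip()) if f]
    if len(fields) != 9:
        raise ValueError(f"expected 9 fields, got {len(fields)}: {line!r}")

    # The first field is the image identifier; the rest are numeric.
    det = Detection(fields[0], *(float(f) for f in fields[1:]))

    # The three angles are stated to lie in [-pi, pi].
    for name in ("azimuth", "elevation", "in_plane_rotation"):
        angle = getattr(det, name)
        if abs(angle) > math.pi + tol:
            raise ValueError(f"{name}={angle} is outside [-pi, pi]")
    return det
```

A whole file could then be loaded with `[parse_result_line(l) for l in open(path) if l.strip()]`, where `path` points to the downloaded result file.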

Note on 3D Object Reconstruction

- When ObjectNet3D is used for benchmarking 3D object reconstruction, we do NOT suggest using the 3D CAD models in ObjectNet3D for training, since the same set of 3D CAD models is used to annotate the test set. Using the same 3D CAD models in both training and testing would bias the 3D reconstruction results.

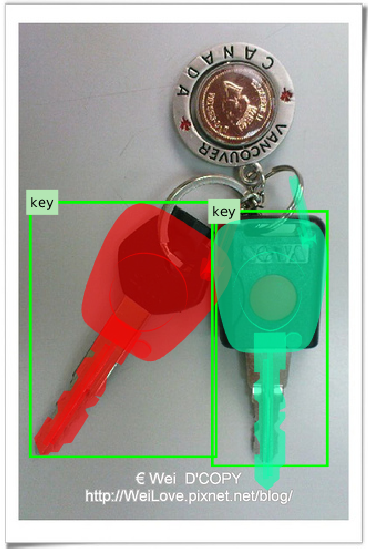

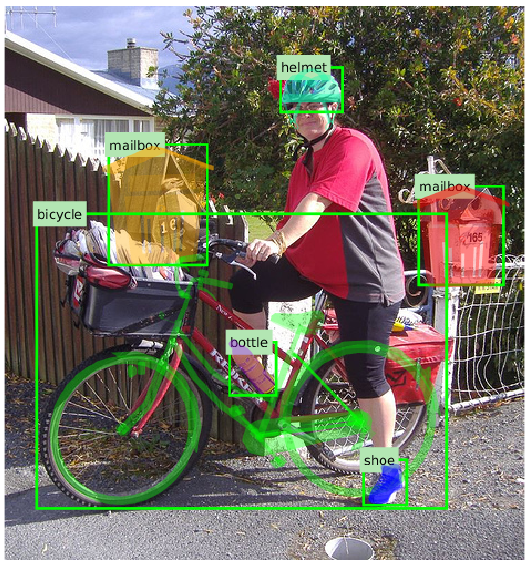

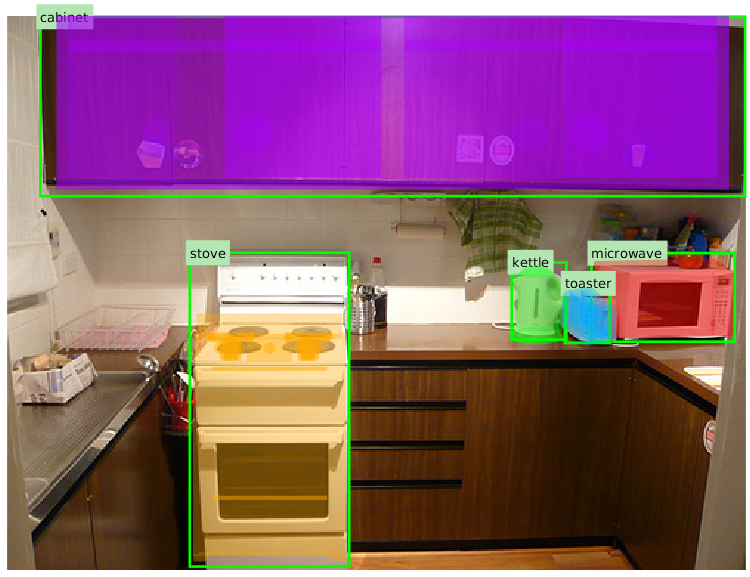

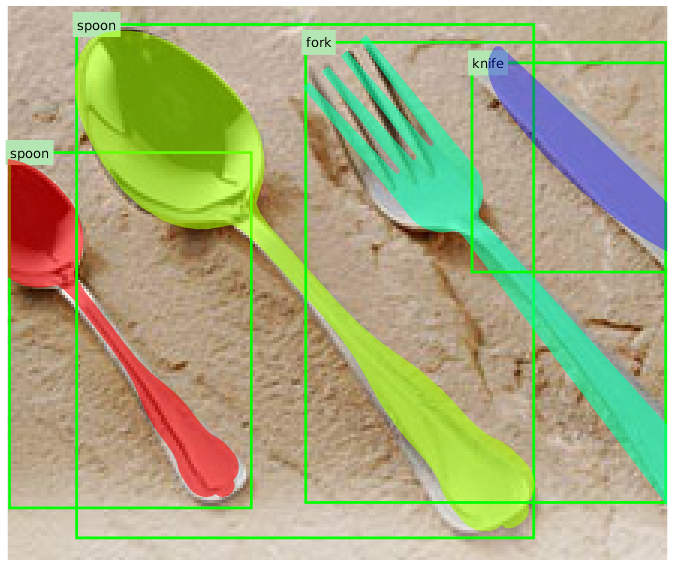

Annotation Demo

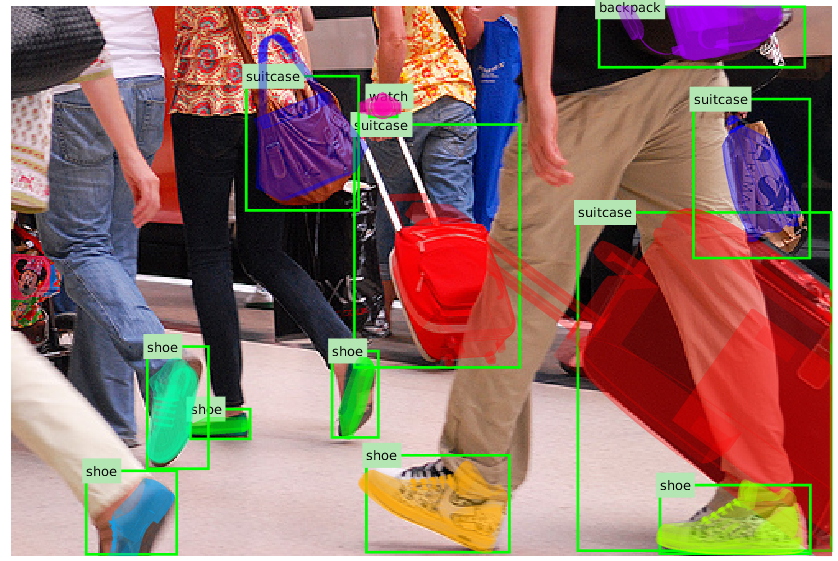

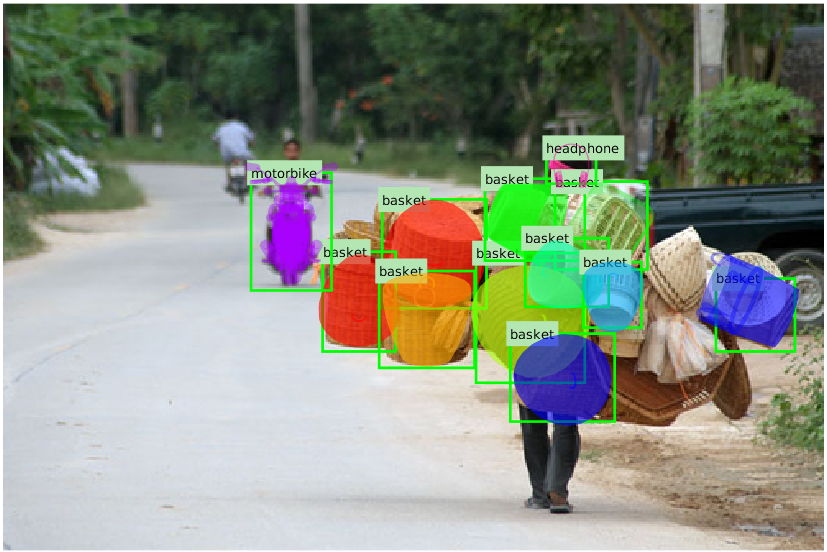

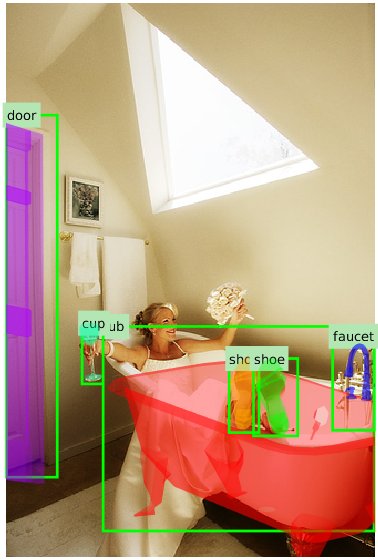

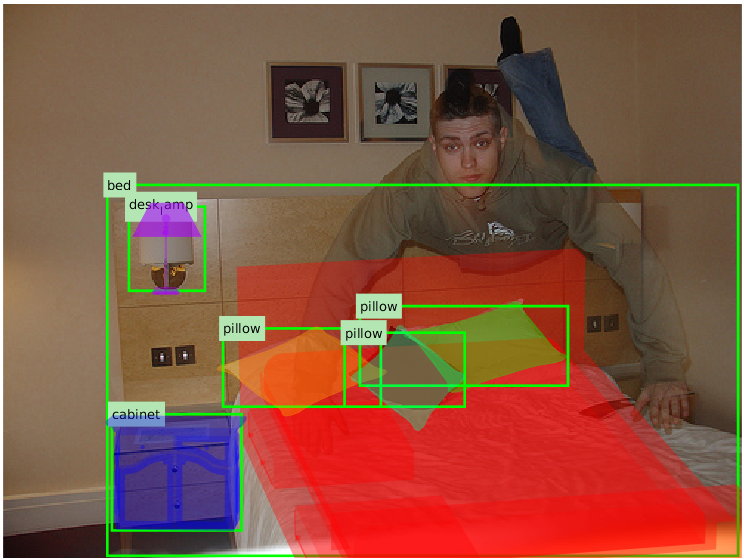

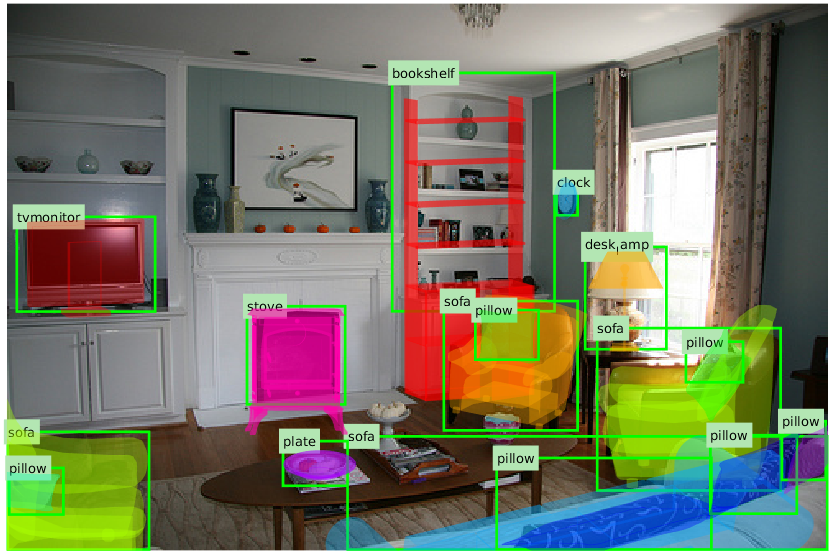

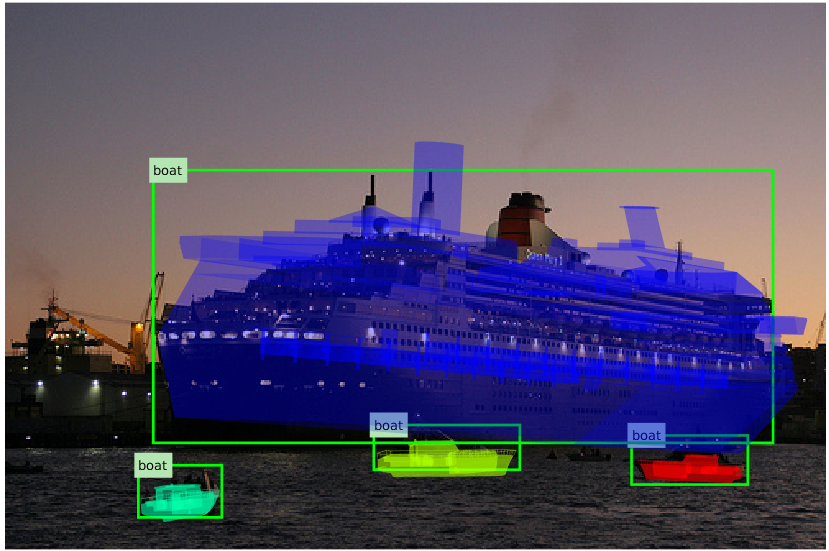

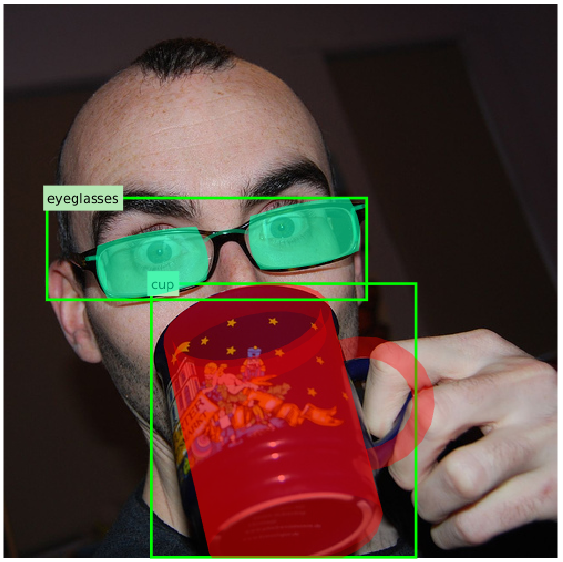

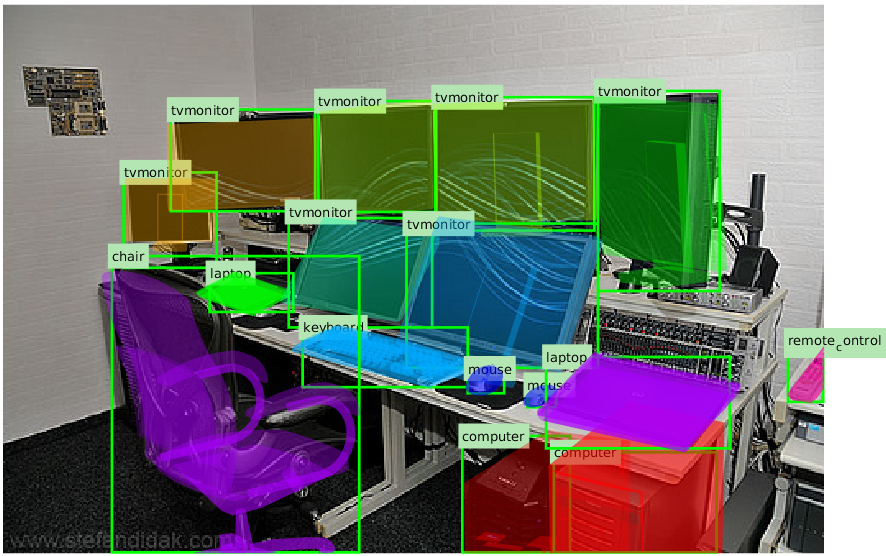

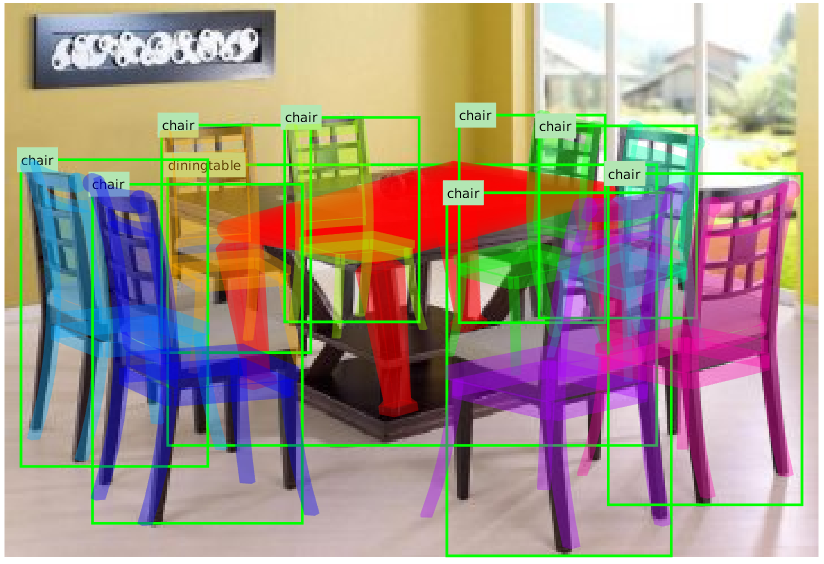

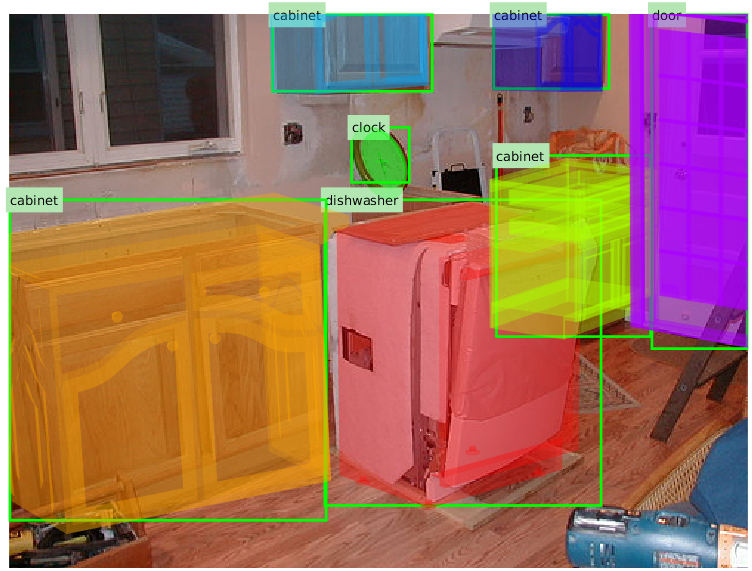

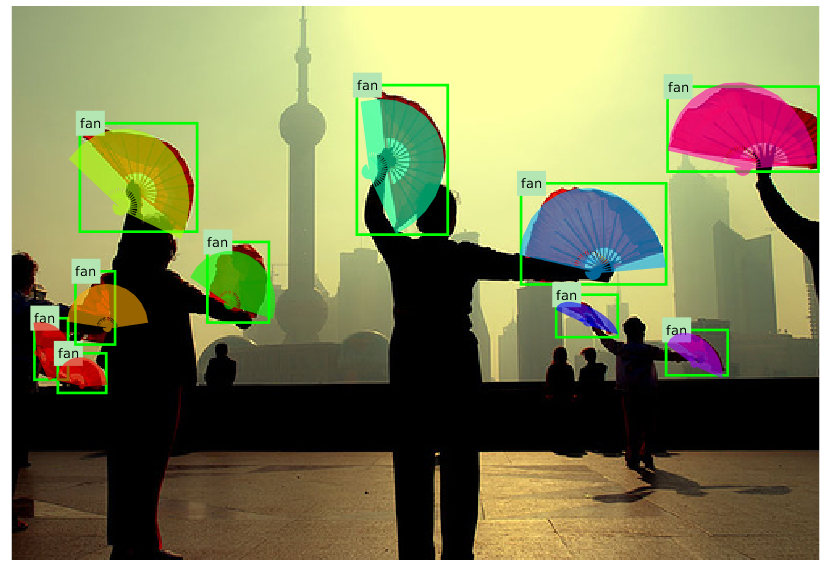

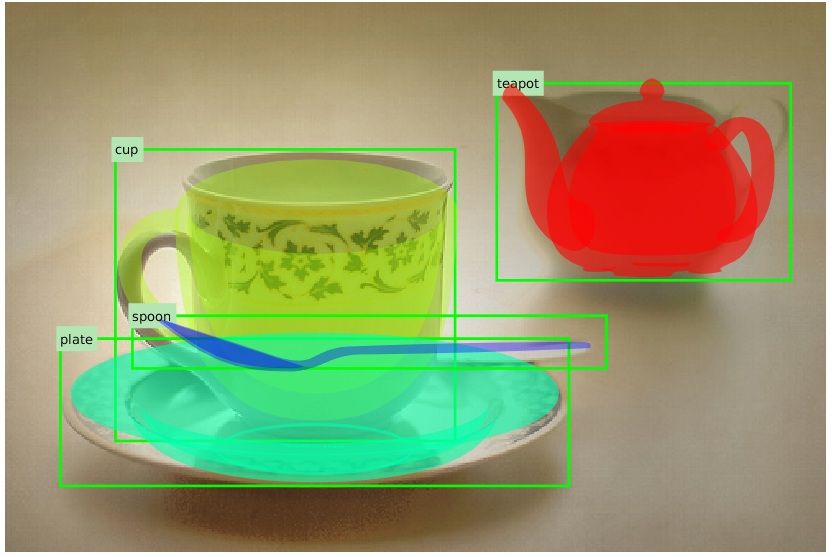

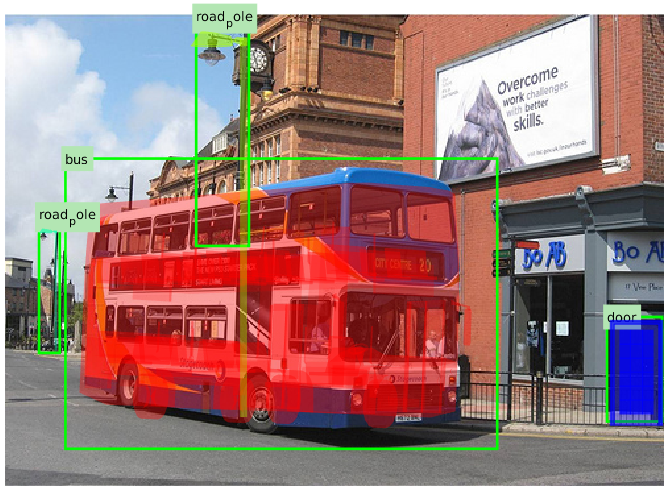

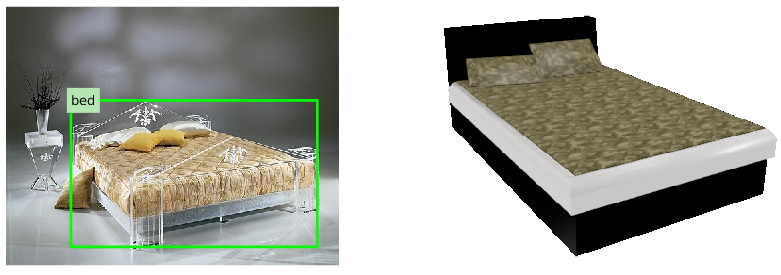

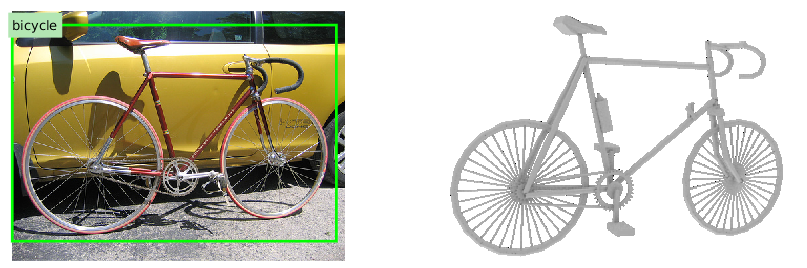

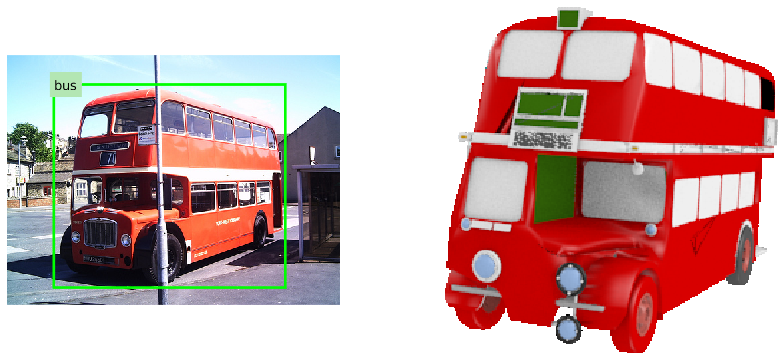

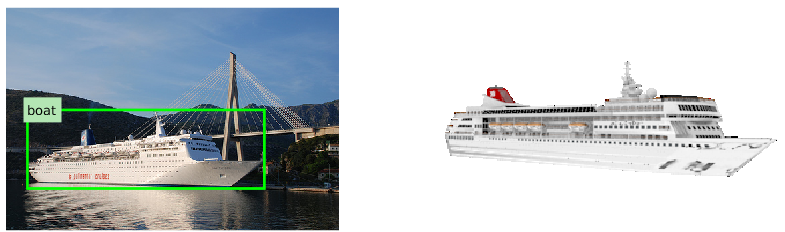

3D Pose Annotation Examples

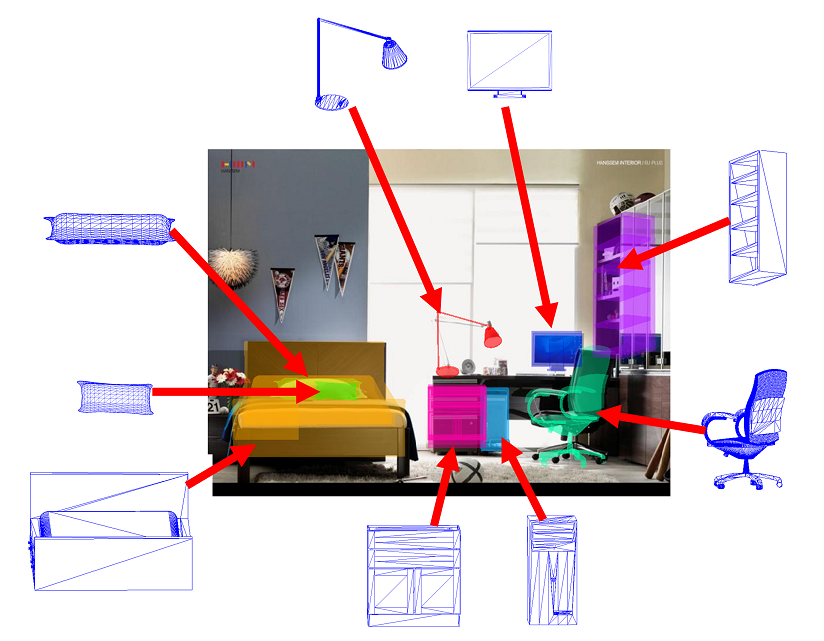

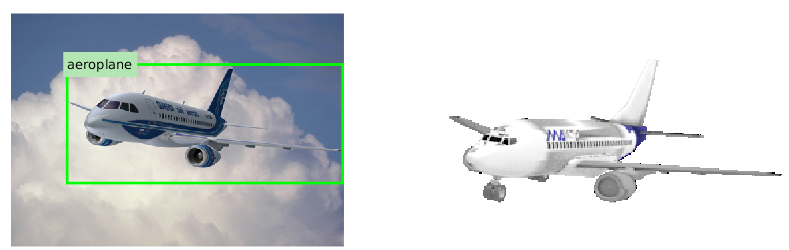

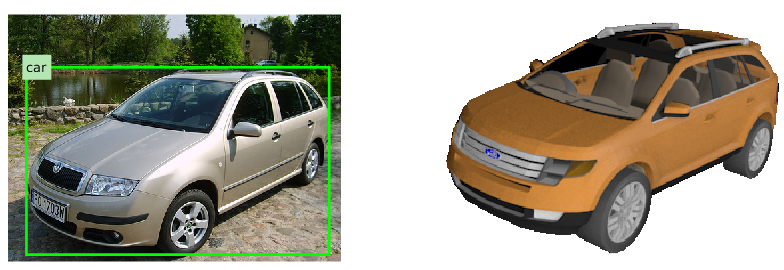

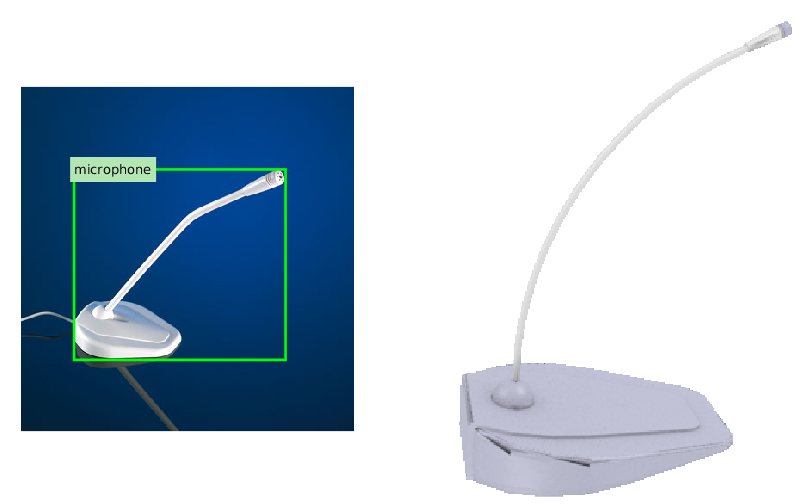

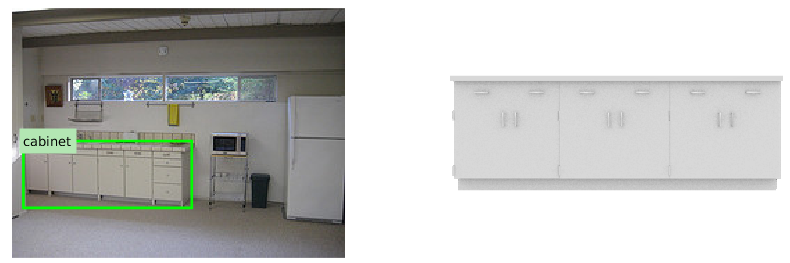

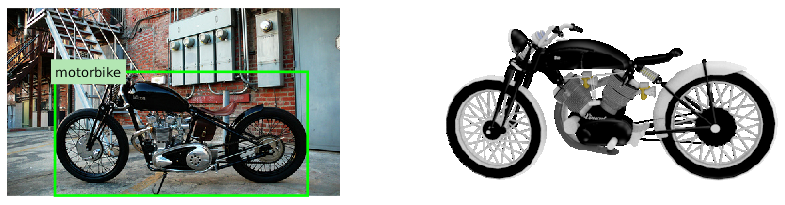

3D Shape Annotation Examples

Acknowledgements

- We acknowledge the support of NSF grants IIS-1528025 and DMS-1546206, a Google Focused Research award, and grants SPO #124316 and 1191689-1-UDAWF from the Stanford AI Lab-Toyota Center for Artificial Intelligence Research.

Contact : yuxiang at cs dot stanford dot edu

Last update : 6/29/2017