Introduction

Publication

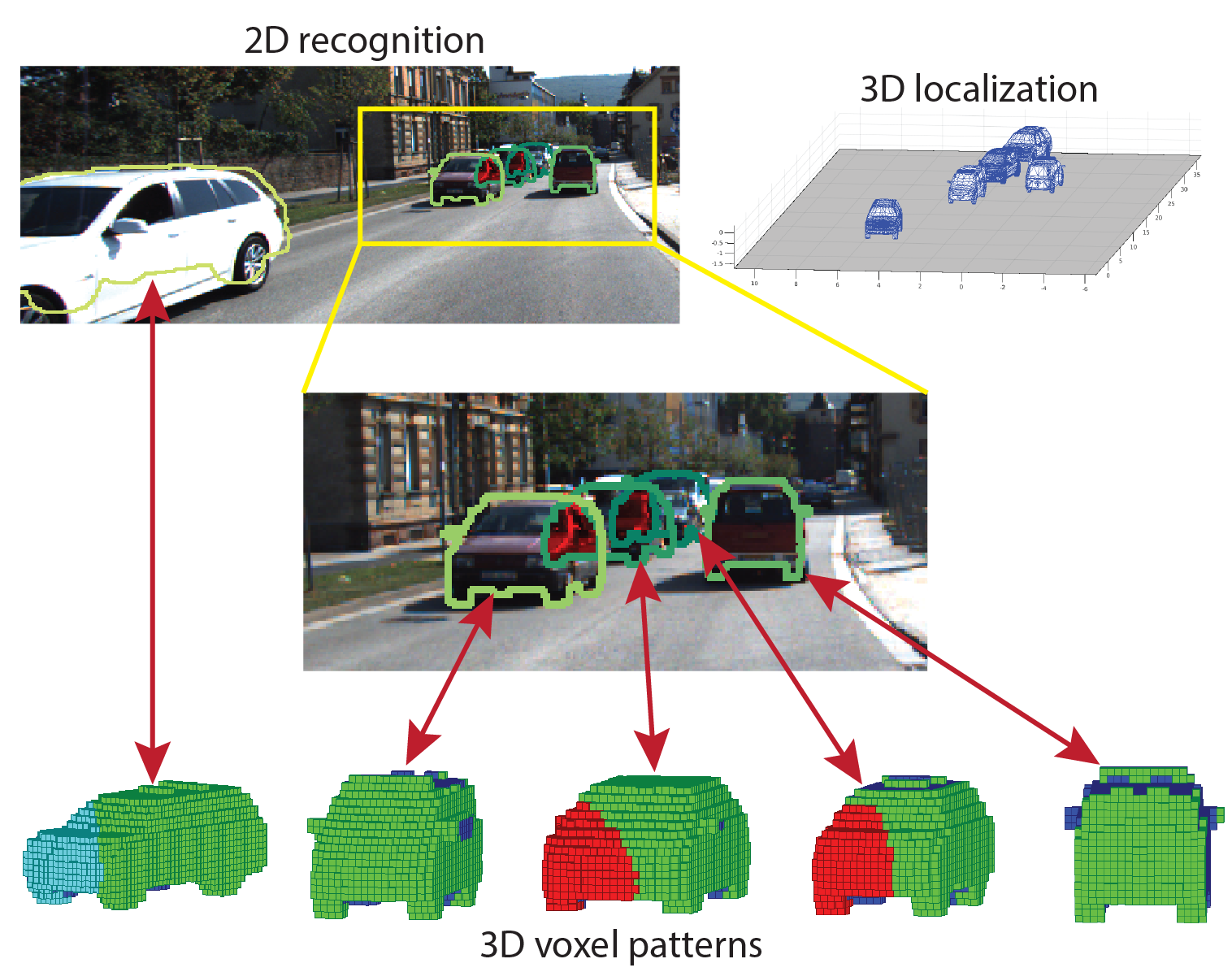

- Yu Xiang, Wongun Choi, Yuanqing Lin and Silvio Savarese. Data-Driven 3D Voxel Patterns for Object Category Recognition. In IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2015. pdf, bibtex, technical report, KITTI results, Code

Annotations

- The 3D voxel exemplar annotations we built from KITTI are available here (about 320 MB).

- For each car in the training set of the KITTI detection benchmark, we provide its 2D segmentation mask and its 3D voxel model by aligning a 3D car model to its 3D cuboid annotated by KITTI.
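The alignment step described above could look roughly like the following. This is only a minimal sketch under stated assumptions, not the procedure used to build the released annotations: the function names `align_model_to_cuboid` and `voxelize`, the axis convention (cuboid size given along x, y, z, with yaw as a rotation about the y axis), and the grid resolution are all hypothetical. It rescales a model's bounding box to the cuboid dimensions, rotates and translates it into place, and then builds a coarse occupancy grid from the model's points.

```python
import numpy as np


def align_model_to_cuboid(vertices, dims, center, yaw):
    """Fit model vertices (N x 3) into a cuboid.

    Assumed convention (hypothetical, not taken from the released data):
    dims is the cuboid size along x, y, z; center is the cuboid center;
    yaw is a rotation in radians about the y axis.
    """
    vertices = np.asarray(vertices, dtype=float)
    lo, hi = vertices.min(axis=0), vertices.max(axis=0)
    extent = np.where(hi > lo, hi - lo, 1.0)  # avoid dividing by zero on flat models
    # Center the model's bounding box at the origin and rescale it to the cuboid size.
    scaled = (vertices - (lo + hi) / 2.0) / extent * np.asarray(dims, dtype=float)
    c, s = np.cos(yaw), np.sin(yaw)
    rotation = np.array([[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]])
    return scaled @ rotation.T + np.asarray(center, dtype=float)


def voxelize(points, resolution=32):
    """Mark every cell of a resolution^3 grid that contains at least one point."""
    points = np.asarray(points, dtype=float)
    lo, hi = points.min(axis=0), points.max(axis=0)
    extent = np.where(hi > lo, hi - lo, 1.0)
    idx = np.floor((points - lo) / extent * resolution).astype(int)
    idx = np.clip(idx, 0, resolution - 1)  # points on the upper faces fall in the last cell
    grid = np.zeros((resolution,) * 3, dtype=bool)
    grid[idx[:, 0], idx[:, 1], idx[:, 2]] = True
    return grid
```

Note that this grid marks only the cells touched by the given points. A solid voxel model would additionally need dense surface sampling or interior filling, which the sketch leaves out.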

References

- A. Geiger, P. Lenz, and R. Urtasun. Are we ready for autonomous driving? The KITTI vision benchmark suite. In CVPR, pages 3354–3361, 2012.

- Y. Xiang and S. Savarese. Object detection by 3D aspectlets and occlusion reasoning. In 3dRR, pages 530–537, 2013.

Acknowledgements

- We acknowledge the support of NSF CAREER grant No. 1054127, ONR award N000141110389, and DARPA UPSIDE grant A13-0895-S002.

Contact: yuxiang at umich dot edu

Last update: 6/16/2015